Over the last few months, I’ve been interested in data zooming, where a finite range of data (say 0-1) can be magnified and explored in greater detail. We are all familiar with the paradigm. In Microsoft Word or Photoshop, for example, you zoom the view (e.g. 125%) and in the same amount of screen real estate, you see a smaller region (of words or pixels) in greater detail.

Zooming applies equally to any stream of numbers. In software, we can map a fader to move between 0 and 1, then map a similar fader (or the same fader) to the range 0.0-0.1, which is 1/10 of its original range.

While a simple concept, data zooming can be a powerful tool. Magnification embodies focus, detail, and exploration. If sound is data or controlled by data, then magnification enables us to literally ‘zoom in’ on audio. Data zooming, then, becomes a way to explore sound space.

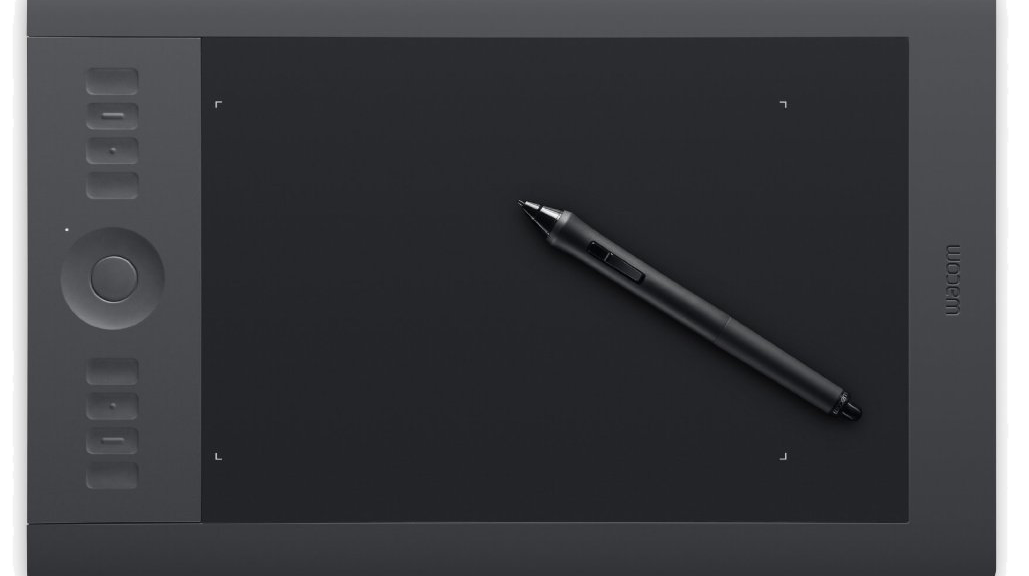

Inspired by Palle Dahlstedt [1], I set out to rapidly prototype a way to zoom in on a data stream for live performance. I chose the Wacom tablet since I often use it in live performance with Kyma. I was most fascinated with !PenX (0-1 range), which I often map to the TimeIndex of a sound (0@start of sound, 1@end of sound). Regardless of the audio sample's length, !PenX can be set so that 0 is always the beginning of the sample and 1 is always the end. (Note: TimeIndex expects a range of -1 to 1, but the !PenX range can easily be shifted to fit.)
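The range shift mentioned in the note is a simple linear map. Here is a minimal sketch in Python rather than Capytalk, assuming TimeIndex runs from -1 at the start of the sound to 1 at the end; the function name `pen_to_time_index` is hypothetical and used only for illustration.

```python
def pen_to_time_index(pen_x: float) -> float:
    """Map a pen position in 0..1 onto a -1..1 time index.

    Assumption: -1 is the start of the sound and 1 is the end.
    """
    # Double the 0..1 range to 0..2, then shift it down to -1..1.
    return 2.0 * pen_x - 1.0
```

With this mapping, the left edge of the tablet lands on the start of the sample, the right edge on the end, and the middle of the tablet on the midpoint.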

The basic gist of data zooming is that we need two controllers to do the job: a continuous fader (e.g. !PenX) and a button to trigger the zoom (e.g. !PenButton2). The pen/fader supplies the values that we read and, in our case, map onto the TimeIndex of an audio sample.

Data zoom works like this: whenever the zoom button is pressed, we take the current location of the fader and “zoom” in to that location. With zoom engaged, the fader moves at a smaller scale around this location point. The magnitude of the zoom can be altered, but for the purposes of this example, I worked with a 10x zoom. Before jumping into Capytalk and Kyma, let’s walk through my initial prototype inside Max/MSP. The math is the same.

The range of initial values (!PenX) is 0-1. When the zoom button is pressed, we need to save the current location of !PenX and use it as our new zoom location (offset). In addition, we need to alter the range through which !PenX moves (scale). I’ve uploaded the Max prototype patch and Kyma file here.

To keep the zoomed range aligned with the pen's actual position on the tablet, I had to add a second offset: the saved location, scaled down by the zoom factor, is subtracted from the zoom offset. The Max prototype includes multiple zoom levels at powers of 10.
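The scale, offset, and centering steps described above can be sketched as three terms. This is a Python illustration of the math under stated assumptions, not a transcription of the Max patch; the names `zoom`, `anchor`, and `order` are hypothetical.

```python
def zoom(pen_x: float, anchor: float, order: int = 1) -> float:
    """Zoom a 0..1 pen value around a saved anchor position.

    Assumptions: `anchor` is the pen position saved when the zoom
    button was pressed, and `order` is the zoom level as a power
    of 10 (0 = no zoom, 1 = 10x, 2 = 100x).
    """
    scale = 10.0 ** order
    scaled = pen_x / scale       # the pen now moves through a smaller range
    offset = anchor              # jump to the saved zoom location
    centering = anchor / scale   # correct for where the pen sits on the tablet
    return scaled + offset - centering
```

At the moment of the press, `pen_x` equals `anchor`, so the output does not jump. With an anchor of 0.5 and an order of 1, the full travel of the pen from 0 to 1 covers roughly 0.45 to 0.55.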

With Kyma, I used the same basic concept. When a button is pressed (!PenButton2), we zoom to the current value of X (sampleAndHold) and scale the range of !PenX down from 0-1 by the zoom order (a power of 10). Because 10^0 = 1, we can use a button’s press (binary 0 and 1) as the exponent to create a simple on/off zoom in Kyma.

Here’s the Capytalk that achieves data zooming:

(!PenX / (10 ** !PenButton2)) + ((((!PenButton2) sampleAndHold: !PenX) - (((!PenButton2) sampleAndHold: !PenX) / (10 ** !PenButton2))) * !PenButton2)

First, !PenX is scaled down by a power of 10 when !PenButton2 is pressed. We then add back (offset) the location of !PenX from the moment !PenButton2 was pressed. To account for the actual pen location on the tablet, we have to subtract that sampled location of !PenX, scaled down by the same zoom order. Lastly, we multiply this offset by !PenButton2 so that when the button becomes 0 (zoom off), the zoom offset no longer affects the initial, non-zoomed state of !PenX. Thus, with !PenButton2 off, the Capytalk is just (!PenX / 1) + 0. Below is a short video sounding the process.
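To check the arithmetic outside Kyma, here is a rough Python emulation of the expression above. It assumes that sampleAndHold captures !PenX at the moment the button goes from 0 to 1 and holds that value afterwards; the class name `DataZoom` and its `step` method are hypothetical.

```python
class DataZoom:
    """Emulates the on/off 10x zoom expression, one control update at a time."""

    def __init__(self, factor: float = 10.0):
        self.factor = factor
        self.held = 0.0        # stand-in for (!PenButton2 sampleAndHold: !PenX)
        self.prev_button = 0

    def step(self, pen_x: float, button: int) -> float:
        # Assumed sample-and-hold behavior: capture on the rising edge only.
        if button and not self.prev_button:
            self.held = pen_x
        self.prev_button = button

        scale = self.factor ** button   # 10 ** 0 = 1 when the button is off
        # Same three terms as the Capytalk: scaled pen + (offset - centering) * button
        return pen_x / scale + (self.held - self.held / scale) * button
```

Pressing the button at 0.4 and then moving the pen to 0.5 yields about 0.41: the pen has moved 0.1 on the tablet, but the output has moved only 0.01. Releasing the button returns the raw pen value.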

Download the Kyma and Max files.

[1] Palle Dahlstedt. “Dynamic Mapping Strategies for Expressive Synthesis Performance and Improvisation.” In Computer Music Modeling and Retrieval. Genesis of Meaning in Sound and Music. 5th International Symposium, CMMR 2008, Copenhagen, Denmark, May 19-23, 2008.